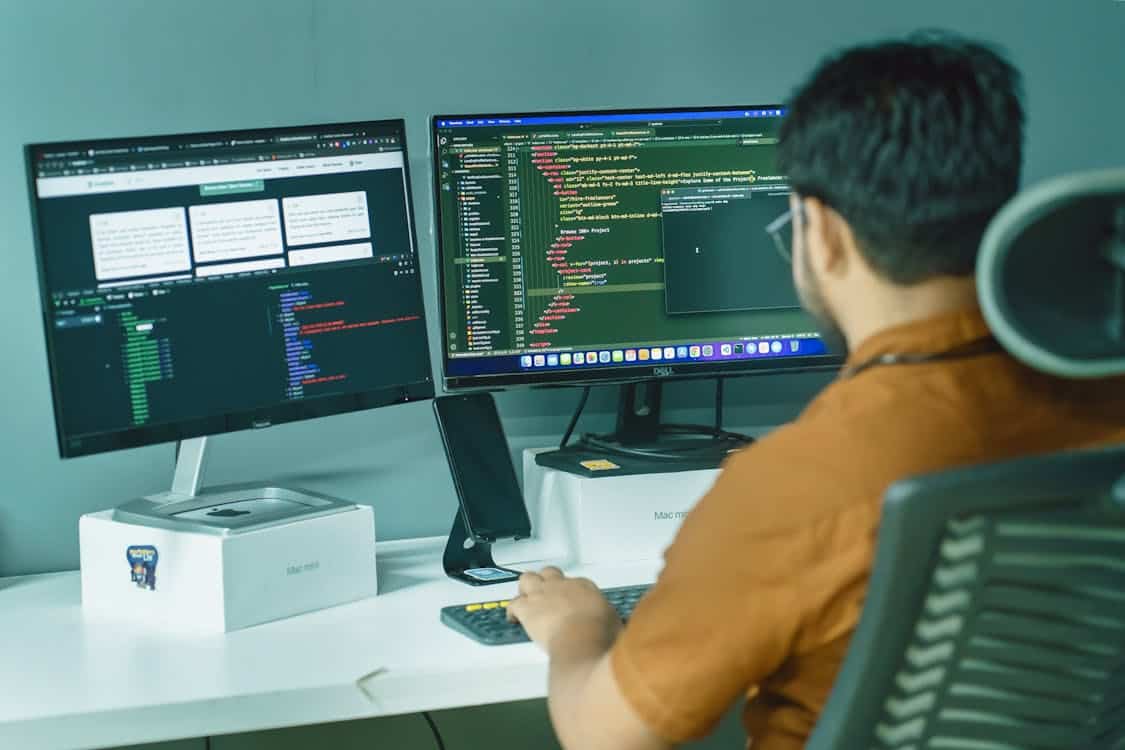

Software development is undergoing a marked shift as statistical models and learning systems enter everyday workflows. Developers now have pragmatic helpers that take on repetitive chores and propose novel approaches, freeing human attention for bigger design problems.

The net effect is faster iteration, more predictable releases and higher expectations of what automation can deliver in quality and speed. Teams combine machine suggestions with human judgment to write robust code without losing sight of clarity and intent.

1. AI Code Generation And Completion

Large language models are fluent in many programming languages and can produce non-trivial functions from a few clear sentences of natural language. Autocompletion in modern editors suggests whole blocks and can stitch together helper routines, so a developer keeps momentum instead of switching context between browser and editor.

These assistants accelerate routine tasks while also nudging teams to think more about design, since time saved on boilerplate can be spent on architecture and testing.

Tools such as Blitzy are gaining attention for raising productivity without disrupting established workflows. Still, generated code must meet the same standards as hand-written code, so unit tests and peer review remain essential parts of the pipeline.

Teams use code generation to scaffold APIs, translate legacy snippets into current frameworks and generate test stubs that follow project conventions, which gets new features into shape more quickly.

Rapid scaffolding moves prototypes toward production quality faster than manual typing alone, but the process introduces risks when teams accept outputs without verification.

Licensing issues and subtle semantic differences in libraries require careful review and a clear policy for generated artifacts. Best practice blends automation with human ownership and a thin layer of automated checks that flag unusual patterns for engineers to inspect.
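
As a rough illustration of such a check, the Python sketch below parses a generated file and flags imports of packages outside a team allowlist. The `APPROVED_PACKAGES` set and the `unapproved_imports` helper are hypothetical; a real policy for generated artifacts would also cover licenses, versions and other unusual patterns.

```python
import ast

# Hypothetical allowlist: top-level packages the team has already vetted
# for licensing and behavior. Anything else is flagged for human review.
APPROVED_PACKAGES = {"json", "logging", "pathlib", "requests"}


def unapproved_imports(source: str, approved: set[str] = APPROVED_PACKAGES) -> list[str]:
    """Return sorted top-level package names imported by `source` that are not approved."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            for alias in node.names:
                found.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            # Relative imports (level > 0) point inside the project, so skip them.
            if node.level == 0 and node.module:
                found.add(node.module.split(".")[0])
    return sorted(found - approved)


generated = """
import json
import leftpad_utils
from requests.adapters import HTTPAdapter
from .local import helper
"""
print(unapproved_imports(generated))  # ['leftpad_utils']
```

Because the check only reads the syntax tree, it never executes the generated code, which keeps it safe to run on every suggestion.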

2. Automated Testing And Bug Detection

Test generation tools use model-driven heuristics to produce unit and integration tests that explore edge cases a human might skip, increasing coverage of hot code paths. Machine learning applied to execution traces can surface rare failure modes and suggest additional assertions that help lock down behavior across releases.
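
Production tools choose their inputs with models, but the underlying idea can be shown with a simpler, swapped-in technique: hand-picked boundary values plus seeded random samples, checked against properties the function must always satisfy, in the style of property-based testing. The `clamp` function and its invariants are hypothetical examples, not output from any particular tool.

```python
import random


def edge_case_ints(n_random: int = 20, seed: int = 0) -> list[int]:
    """Boundary values that are easy to forget, plus seeded random samples."""
    rng = random.Random(seed)  # seeded so any failure is reproducible
    edges = [0, 1, -1, 2**31 - 1, -(2**31), 2**63 - 1, -(2**63)]
    return edges + [rng.randint(-10**6, 10**6) for _ in range(n_random)]


def clamp(value: int, low: int, high: int) -> int:
    """Hypothetical function under test."""
    return max(low, min(value, high))


def clamp_violations(low: int = -100, high: int = 100) -> list[int]:
    """Return inputs that break clamp's invariants; an empty list means all passed."""
    failures = []
    for v in edge_case_ints():
        result = clamp(v, low, high)
        in_range = low <= result <= high
        idempotent = clamp(result, low, high) == result
        # Inputs already inside the range must come back unchanged.
        keeps_valid_input = result == v or not (low <= v <= high)
        if not (in_range and idempotent and keeps_valid_input):
            failures.append(v)
    return failures


print(clamp_violations())  # []
```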

These systems shorten the feedback loop so regressions are caught earlier, which reduces the cost of fixes and improves developer confidence. Despite the gains, teams must tune false-positive thresholds and keep a curatorial role so the signal-to-noise ratio stays healthy.

Anomaly detection engines analyze logs, metrics and traces to detect performance drifts and behavioral changes before they impact many users, offering a form of predictive maintenance that complements scheduled checks. When these tools surface suspicious patterns they often include likely root causes and reproduction hints that cut investigation time significantly.
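
A minimal version of drift detection is a rolling z-score: compare each new sample with the mean and standard deviation of the samples just before it. The sketch assumes evenly spaced latency samples; the window of 10 and the threshold of 3.0 are illustrative defaults, not tuned values.

```python
from statistics import mean, stdev


def detect_drift(samples: list[float], window: int = 10, threshold: float = 3.0) -> list[int]:
    """Return indices whose value deviates more than `threshold` standard
    deviations from the mean of the preceding `window` samples."""
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Perfectly flat history: any change at all counts as a deviation.
            if samples[i] != mu:
                anomalies.append(i)
        elif abs(samples[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


latency_ms = [120, 118, 122, 119, 121, 120, 117, 123, 119, 121, 120, 240, 122]
print(detect_drift(latency_ms))  # [11]
```

Because the spike enters later windows, it inflates the spread that follows it; production engines typically use more robust statistics, seasonality models and correlation across logs, metrics and traces.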

Yet models can overfit or highlight coincidental correlations, which is why human validation remains part of the incident response life cycle. Combining automated insights with traditional observability tooling yields a pragmatic approach to reliability that scales with the code base.

3. Intelligent Code Review And Security Analysis

AI-enabled code review tools score changes for style, complexity and potential vulnerabilities, drawing attention to patterns that often escape a single-pass human review. They identify insecure usage such as improper input handling or missing encryption and pair each finding with contextual examples and remediation suggestions that speed corrective work.
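
The pattern-matching half of such a review can be sketched with Python's standard `ast` module. The rule set below is hypothetical and deliberately tiny: it flags calls named `eval` or `exec` and any call that passes `shell=True`, pairing each finding with a remediation hint.

```python
import ast

# Hypothetical rule set: call name -> remediation hint.
RISKY_CALLS = {
    "eval": "avoid eval on untrusted input; use json.loads or ast.literal_eval",
    "exec": "avoid exec; dispatch to known functions instead",
}


def scan(source: str) -> list[tuple[int, str]]:
    """Return sorted (line, message) findings for a few insecure patterns."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # Plain calls like eval(x) are Names; method calls like os.system(x) are Attributes.
        name = func.id if isinstance(func, ast.Name) else getattr(func, "attr", None)
        if name in RISKY_CALLS:
            findings.append((node.lineno, f"{name}(): {RISKY_CALLS[name]}"))
        for kw in node.keywords:
            if kw.arg == "shell" and isinstance(kw.value, ast.Constant) and kw.value.value is True:
                findings.append((node.lineno, "shell=True: pass an argument list to avoid shell injection"))
    return sorted(findings)


code = """
import subprocess
user = input()
subprocess.run("ls " + user, shell=True)
result = eval(user)
"""
for line, message in scan(code):
    print(line, message)
```

Matching on names alone means any method that happens to be called `eval` is flagged too, which is exactly the kind of false positive that needs ongoing calibration.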

Early detection reduces the blast radius of defects and shortens the window between discovery and remediation, making releases safer and more predictable. Of course, these systems produce false positives and need ongoing calibration so teams do not start ignoring important alerts.

Supply chain analysis driven by machine learning inspects dependency graphs and monitors for abnormal behavior in packages, commits and binaries, helping to catch compromised components before they reach production. Automated advice may include concrete steps like pinning versions, auditing transitive dependencies or reverting suspicious updates to reduce exposure.
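
Version pinning is the easiest of those steps to automate. This sketch flags lines in a simplified `requirements.txt` that do not pin an exact version with `==`. It assumes one requirement per line and does not attempt to handle line continuations, hash options or URL-based requirements.

```python
import re

# "name==version", optionally with extras such as "urllib3[socks]==2.2.1".
PINNED = re.compile(r"[A-Za-z0-9][A-Za-z0-9._-]*\s*(\[[^\]]+\])?\s*==\s*[^=\s;]+")


def unpinned_requirements(text: str) -> list[str]:
    """Return requirement lines that do not pin an exact version."""
    flagged = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop trailing comments and whitespace
        if not line or line.startswith("-"):
            continue  # skip blank lines and pip options such as -r
        if not PINNED.match(line):
            flagged.append(line)
    return flagged


requirements = """
requests==2.31.0
flask>=2.0          # a range, not a pin
numpy
urllib3[socks]==2.2.1 ; python_version >= "3.8"
-r dev-requirements.txt
"""
print(unpinned_requirements(requirements))  # ['flask>=2.0', 'numpy']
```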

Developers combine these automated warnings with manual audits of high-risk modules and continuous verification of critical paths. Over time the models adapt to normal patterns in a repository, which improves signal relevance and reduces nuisance notifications.

4. Natural Language Interfaces For Developer Tools

Conversational interfaces let engineers ask for code summaries, find usages and request refactors in everyday language, lowering the barrier to interacting with large code bases and design docs. Rather than hunting through file trees, a developer can state an intent and receive a concise plan or a sample change that respects naming and formatting conventions, which speeds iterative work.

These tools help teams onboard new members faster by making institutional knowledge more accessible without heavy documentation trawling. That said, conversation cannot replace domain expertise and suggested edits should be validated and aligned with team standards before being merged.

When embedded into local editors and continuous integration systems, natural language tools can apply quick fixes, run focused experiments and annotate pull requests with clear action items, keeping context close to the code change. Continuous integration annotations that include an AI-generated summary and a risk assessment reduce the cognitive load of code review and speed up decisions.
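
One way to picture such an annotation follows. The summary string stands in for model output, and the risk level comes from a hypothetical rule of thumb (sensitive path prefixes plus total churn) rather than a learned model; `FileChange`, `SENSITIVE_PREFIXES` and the 200-line threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical path prefixes a team might treat as sensitive.
SENSITIVE_PREFIXES = ("auth/", "payments/", "migrations/")
CHURN_LIMIT = 200  # hypothetical: total changed lines considered "large"


@dataclass
class FileChange:
    path: str
    lines_added: int
    lines_removed: int


def risk_level(changes: list[FileChange]) -> str:
    """Rule-based stand-in for a risk model: sensitive paths and large diffs raise risk."""
    churn = sum(c.lines_added + c.lines_removed for c in changes)
    touches_sensitive = any(c.path.startswith(SENSITIVE_PREFIXES) for c in changes)
    if touches_sensitive and churn > CHURN_LIMIT:
        return "high"
    if touches_sensitive or churn > CHURN_LIMIT:
        return "medium"
    return "low"


def annotation(summary: str, changes: list[FileChange]) -> str:
    """Render a pull request comment from a summary and the computed risk level."""
    files = "\n".join(f"- `{c.path}` (+{c.lines_added}/-{c.lines_removed})" for c in changes)
    return f"**Summary:** {summary}\n**Risk:** {risk_level(changes)}\n\n**Files:**\n{files}"


changes = [FileChange("auth/session.py", 40, 12), FileChange("README.md", 3, 0)]
print(annotation("Shortens session expiry and documents the change.", changes))
```

Keeping the risk rule separate from the summary makes it easy for reviewers to see why a change was marked risky, even if the summary itself comes from a model.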

This tight coupling keeps critical information visible to reviewers and reduces back and forth that stalls progress. Teams still need explicit coding conventions and review rituals so the interface complements human coordination rather than becoming a crutch.

5. AI Assisted Deployment And Observability

Operational tooling uses predictive models to anticipate capacity needs and offer scaling recommendations ahead of traffic spikes, which helps teams avoid reactive firefighting and reduces downtime. During incidents these systems correlate traces, logs and metrics to propose likely root causes and remediation steps, narrowing the blast radius and accelerating recovery.
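
Real capacity planners use seasonal and probabilistic models, but the shape of a scaling recommendation can be sketched with a straight-line forecast. The sketch fits ordinary least squares to recent request rates, extrapolates a few intervals ahead, adds headroom and converts the result into a replica count. The per-replica capacity, 20% headroom and two-replica floor are hypothetical parameters.

```python
import math


def forecast_linear(history: list[float], steps_ahead: int) -> float:
    """Fit an ordinary least-squares line through (t, value) and extrapolate."""
    n = len(history)
    if n < 2:
        raise ValueError("need at least two observations to fit a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(history) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(history))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return intercept + slope * (n - 1 + steps_ahead)


def recommend_replicas(requests_per_min: list[float], per_replica_capacity: float,
                       steps_ahead: int = 3, headroom: float = 0.2, minimum: int = 2) -> int:
    """Replicas needed for the forecast load plus headroom, never below `minimum`."""
    predicted = forecast_linear(requests_per_min, steps_ahead)
    needed = math.ceil(predicted * (1 + headroom) / per_replica_capacity)
    return max(minimum, needed)


traffic = [1000, 1100, 1200, 1300, 1400, 1500]
print(recommend_replicas(traffic, per_replica_capacity=500))  # 5
```

With traffic growing by 100 requests per minute each interval, the forecast three intervals ahead is 1,800 requests per minute; with 20% headroom that calls for 2,160 of capacity, or five replicas of 500.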

They can also suggest targeted rollbacks or configuration changes that minimize user impact while a deeper fix is prepared. Operators retain final control, and the suggestions serve to cut through noise and focus attention where it has the most effect.
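
A rollback suggestion often comes down to comparing error rates before and after a change. The sketch below suggests rolling back only once the new version has served enough requests and its error rate exceeds a multiple of the baseline; `suggest_rollback` and all of its thresholds are hypothetical, and the final decision stays with an operator.

```python
def suggest_rollback(baseline_errors: int, baseline_total: int,
                     new_errors: int, new_total: int,
                     max_ratio: float = 2.0, min_requests: int = 100,
                     zero_baseline_limit: float = 0.01) -> bool:
    """Return True when the new version's error rate looks clearly worse than the baseline."""
    if new_total < min_requests:
        return False  # not enough traffic yet to judge
    baseline_rate = baseline_errors / baseline_total if baseline_total else 0.0
    new_rate = new_errors / new_total
    if baseline_rate == 0:
        # A ratio against zero is meaningless, so fall back to an absolute limit.
        return new_rate > zero_baseline_limit
    return new_rate > max_ratio * baseline_rate


print(suggest_rollback(10, 10_000, 12, 400))   # True: 3% vs a 0.1% baseline
print(suggest_rollback(10, 10_000, 1, 1_000))  # False: 0.1%, same as baseline
```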

Cost analysis engines powered by machine learning inspect resource usage across environments and propose rightsizing changes that trim wasted spend without hurting latency for critical services. By pairing recommendations with policy guards, non-critical adjustments can be automated while changes to key services require human approval, which balances efficiency and risk.
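
The policy guard described here can be expressed as a small routing step. In this sketch, the `Rightsizing` record and the 50% maximum automatic cut are hypothetical: recommendations for critical services, or cuts larger than the limit, go to a human approval queue, while the rest can be applied automatically.

```python
from dataclasses import dataclass


@dataclass
class Rightsizing:
    service: str
    current_cpu: float   # cores currently allocated (assumed > 0)
    proposed_cpu: float  # cores the engine recommends
    critical: bool


def route(recs: list[Rightsizing], max_auto_cut: float = 0.5) -> tuple[list[str], list[str]]:
    """Split recommendations into (auto_apply, needs_approval) service names."""
    auto_apply, needs_approval = [], []
    for r in recs:
        cut = 1 - r.proposed_cpu / r.current_cpu  # fraction of CPU removed
        # Critical services and unusually large cuts always go to a human.
        if r.critical or cut > max_auto_cut:
            needs_approval.append(r.service)
        else:
            auto_apply.append(r.service)
    return auto_apply, needs_approval


recs = [
    Rightsizing("batch-reports", 4.0, 2.0, critical=False),
    Rightsizing("checkout", 8.0, 4.0, critical=True),
    Rightsizing("log-shipper", 4.0, 1.0, critical=False),
]
print(route(recs))  # (['batch-reports'], ['checkout', 'log-shipper'])
```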

These practices reduce operational toil and free engineers to work on features that move the product forward rather than routine maintenance. Continuous monitoring of automated actions is important so teams can spot when an automated decision itself becomes a source of instability.